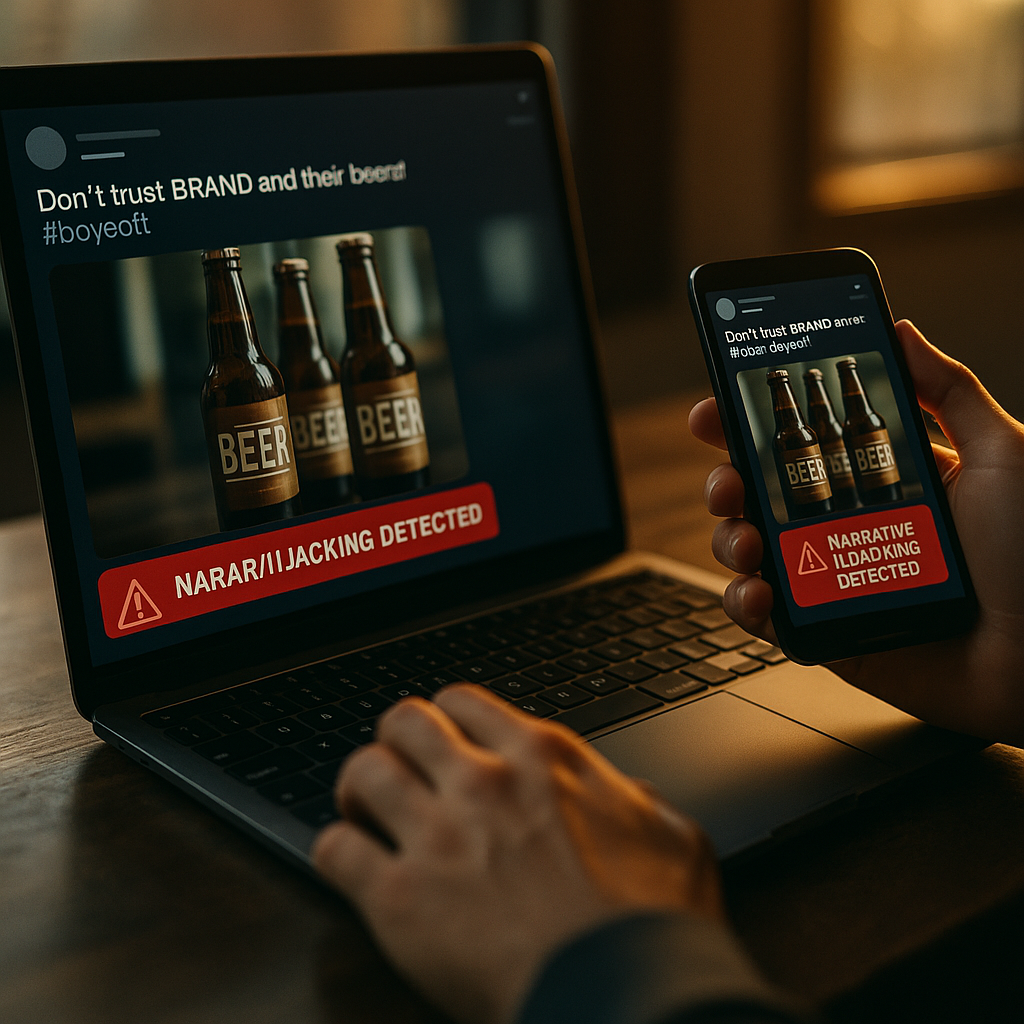

In 2025, brand stories spread at machine speed, and so do distortions. AI-powered narrative hijacking detection helps marketers spot when bad actors, competitors, or misinformed communities reshape a brand’s message across social, search, and AI answers. This article explains how narrative hijacking works, how AI detection systems flag it early, and what to do next, before a false story becomes your audience’s truth.

What Is Narrative Hijacking and Why It Threatens Brand Protection

Narrative hijacking happens when a third party reframes your brand story—your mission, values, product claims, or customer experience—into a different narrative that benefits them or harms you. Unlike normal criticism, hijacking often uses coordinated repetition, selective editing, and emotionally charged framing to steer perception quickly.

In 2025, the risk is higher because content travels through more intermediaries:

- Social algorithms amplify high-engagement claims, including misleading ones.

- Search and AI-generated answers may summarize or remix widely repeated statements, even when the original sources are weak.

- Low-cost generative media enables floods of “evidence-like” posts—screenshots, short videos, fabricated testimonials—at scale.

Brands typically notice a hijack after symptoms appear: sudden spikes in negative mentions, customer support tickets referencing a rumor, or sales objections tied to a new “story” about your company. By then, you are in reaction mode.

Effective brand protection requires earlier detection: identifying when a narrative begins to shift, which communities are driving it, what claims are central, and which channels are accelerating it. That is precisely where AI-based detection becomes practical.

How AI narrative monitoring detects story drift across channels

AI narrative monitoring uses machine learning and natural language processing to track themes, claims, sentiment, and influential accounts across open web pages, news, forums, social platforms, video transcripts, and review sites. The goal is not just “listening,” but understanding how meaning changes over time.

High-performing systems typically combine these capabilities:

- Claim and topic clustering: Groups posts that express the same allegation or storyline using semantic similarity, even when wording differs.

- Narrative trajectory tracking: Measures how a topic evolves—e.g., from a question (“Is this true?”) to an assertion (“They did it.”) to a call to action (“Boycott them.”).

- Entity and relationship mapping: Connects your brand, product names, executives, partners, and competitors to the narratives being discussed.

- Anomaly detection: Flags unusual surges in volume, velocity, or coordinated posting patterns that suggest manipulation.

- Source credibility signals: Weighs signals such as first-seen origin, account history, bot-likelihood, and link quality to prioritize risk.
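As a rough illustration of the anomaly detection idea above, the following minimal sketch flags hours in which mention volume jumps well above a rolling baseline. The function name `flag_surges`, the six-hour window, the threshold of three standard deviations, and the sample counts are all assumptions made for this example; production systems typically combine volume with velocity and coordination signals.

```python
from statistics import mean, stdev

def flag_surges(hourly_counts, window=6, threshold=3.0):
    """Return indices of hours whose count sits far above the preceding window."""
    flagged = []
    for i in range(window, len(hourly_counts)):
        baseline = hourly_counts[i - window:i]
        mu = mean(baseline)
        sigma = stdev(baseline)
        # Floor sigma at 1.0 so a perfectly flat baseline does not divide by zero.
        z = (hourly_counts[i] - mu) / max(sigma, 1.0)
        if z >= threshold:
            flagged.append(i)
    return flagged

# Hypothetical hourly mention counts for one claim cluster.
counts = [12, 10, 11, 13, 12, 11, 12, 48, 95, 14]
print(flag_surges(counts))  # [7, 8]
```

A rolling baseline is used here so that a surge is judged against recent activity rather than an all-time average, which matters for brands whose normal volume varies by season or campaign.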

To make this actionable, detection must be aligned with your specific brand story. That means defining “truth anchors” (official claims, policies, certifications, warranties, pricing rules) and “sensitive narratives” (safety, privacy, labor practices, sustainability, returns, health claims). When monitoring is tuned to these anchors, the system can identify story drift: small shifts in wording that alter meaning.

You might be asking: “Isn’t this just social listening?” Not exactly. Traditional listening counts mentions and sentiment; narrative monitoring identifies the underlying claims, how they spread, and how they mutate, so you can intervene with precision instead of issuing generic PR statements.

Common brand story attacks and what AI catches early

Most hijacks follow recognizable patterns. Knowing them helps you train detection models and prepare response playbooks.

1) Claim inversion

A true statement is flipped into a damaging one. Example: “We do not sell customer data” becomes “They sell your data” through cropped screenshots, paraphrases, or “a friend who works there” posts. AI helps by tracking paraphrase clusters and identifying where the inversion first appeared.

2) Context stripping

A quote or clip is removed from its original context, changing its intent. AI catches this by linking media mentions to original sources, detecting partial quotes, and noting when a clip spreads without the surrounding clarifications.

3) Evidence laundering

Unverified claims are repeatedly cited until they look credible: “Everyone says…” or “Multiple reports confirm…” AI detects circular citations, low-quality domains cross-linking each other, and sudden growth in copy-pasted phrasing.
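One way to picture circular-citation detection is as a search for loops in a "who cites whom" graph. The sketch below is a simplified illustration, not a description of any particular vendor's method; the function name `sources_in_citation_loops` and the example domains are hypothetical.

```python
def sources_in_citation_loops(cites):
    """Return the sources that can reach themselves by following citations.

    `cites` maps each source to the list of sources it cites.
    """
    def reaches_itself(start):
        stack = list(cites.get(start, []))
        seen = set()
        while stack:
            node = stack.pop()
            if node == start:
                return True
            if node in seen:
                continue
            seen.add(node)
            stack.extend(cites.get(node, []))
        return False

    return {source for source in cites if reaches_itself(source)}

# Hypothetical citation graph: three sites cite each other in a ring,
# while a fourth cites the ring and an independent primary source.
cites = {
    "blog-a.example": ["forum-b.example"],
    "forum-b.example": ["site-c.example"],
    "site-c.example": ["blog-a.example"],
    "news-d.example": ["blog-a.example", "regulator.example"],
    "regulator.example": [],
}
print(sorted(sources_in_citation_loops(cites)))
# ['blog-a.example', 'forum-b.example', 'site-c.example']
```

Sources inside a loop are not necessarily wrong, but a claim supported only by such a loop has no independent origin, which is a useful cue for human verification.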

4) Fake customer proof

Fabricated reviews or testimonials appear across platforms, often with similar language. AI can cluster near-duplicate text, detect stylometric similarities, and correlate posting times.
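Near-duplicate clustering can be approximated with word shingles and Jaccard similarity. The following is a minimal sketch under stated assumptions: the three-word shingle size, the 0.5 threshold, the helper names, and the sample reviews are illustrative, and real systems usually add stylometric features and posting-time correlation on top.

```python
import re

def shingles(text, k=3):
    """Return the set of k-word sequences in a lowercased text."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    return {tuple(words[i:i + k]) for i in range(len(words) - k + 1)}

def jaccard(a, b):
    """Share of shingles the two sets have in common (0.0 if both are empty)."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)

def near_duplicate_pairs(reviews, threshold=0.5):
    """Return index pairs of reviews whose shingle overlap meets the threshold."""
    sets = [shingles(r) for r in reviews]
    pairs = []
    for i in range(len(reviews)):
        for j in range(i + 1, len(reviews)):
            if jaccard(sets[i], sets[j]) >= threshold:
                pairs.append((i, j))
    return pairs

# Hypothetical reviews: the first two differ only in their last two words.
reviews = [
    "This blender broke after two days and support never replied to me",
    "This blender broke after two days and support never replied at all",
    "Great blender, smoothies every morning and easy to clean",
]
print(near_duplicate_pairs(reviews))  # [(0, 1)]
```

The pairwise comparison is quadratic in the number of reviews, which is fine for a sketch but would need an approximate method at platform scale.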

5) Competitor framing

Your product is compared using misleading benchmarks. AI identifies the comparative narrative (“Brand A is unsafe; Brand B is certified”) and surfaces which claims are missing citations or rely on misused standards.

6) Synthetic media amplification

Short videos, voice clips, or “leaked memos” spread quickly. Detection combines transcript analysis, document forensics signals (when available), and propagation patterns to prioritize verification.

What AI catches early is not “truth” by itself; it catches risk patterns: coordinated spread, rapid mutation, and high-impact claims aimed at your core trust drivers. Human review then verifies the facts and decides on response.

Building misinformation detection workflows that teams can act on

Detection is only valuable if your organization can respond fast, consistently, and credibly. In 2025, the strongest approach is an end-to-end workflow that connects monitoring to verification, decision-making, and publishing.

Step 1: Define narrative assets and red lines

- Narrative assets: Your mission statement, origin story, quality commitments, certifications, executive bios, flagship product claims.

- Red lines: Safety incidents, legal allegations, fraud claims, privacy breaches, discrimination claims, and any regulated claims.

This gives AI a reference map and gives your team a common language for triage.

Step 2: Establish a verification loop

Create a standard “claim verification packet” that includes: the exact claim, first-seen source, top amplifiers, evidence provided, evidence missing, internal SMEs to consult, and recommended response options. This prevents slow, ad-hoc debates while the narrative grows.
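The packet described above maps naturally onto a small record type. This sketch is one possible shape; the class name, field names, and the `missing_fields` helper are assumptions for illustration, and the example values are hypothetical.

```python
from dataclasses import dataclass, field

@dataclass
class ClaimVerificationPacket:
    claim: str                      # the exact claim, quoted verbatim
    first_seen_source: str          # earliest known origin
    top_amplifiers: list[str] = field(default_factory=list)
    evidence_provided: list[str] = field(default_factory=list)
    evidence_missing: list[str] = field(default_factory=list)
    smes_to_consult: list[str] = field(default_factory=list)
    response_options: list[str] = field(default_factory=list)

    def missing_fields(self):
        """Names of list fields that are still empty, in declaration order."""
        return [name for name, value in vars(self).items()
                if isinstance(value, list) and not value]

# Hypothetical packet at the start of triage.
packet = ClaimVerificationPacket(
    claim="They sell your data",
    first_seen_source="forum thread (hypothetical)",
    top_amplifiers=["account_1", "account_2"],
)
print(packet.missing_fields())
# ['evidence_provided', 'evidence_missing', 'smes_to_consult', 'response_options']
```

Surfacing the empty fields gives the team a quick checklist of what still has to be gathered before a response is approved.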

Step 3: Prioritize by harm and spread

Not every negative post deserves a response. Use a simple scoring model:

- Harm: Could it affect safety, legal exposure, or core trust?

- Reach: Is it moving into mainstream channels or high-intent search?

- Velocity: Is it accelerating hour-over-hour?

- Stickiness: Does it fit a pre-existing stereotype about your category?
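The four questions above can be turned into a simple weighted score. The weights, the 0 to 5 rating scale, and the function name below are assumptions chosen for illustration; each team would calibrate its own values.

```python
# Hypothetical weights; harm is weighted highest because it maps to red lines.
WEIGHTS = {"harm": 0.40, "reach": 0.25, "velocity": 0.20, "stickiness": 0.15}

def priority_score(ratings, weights=WEIGHTS):
    """Combine 0-5 ratings per dimension into a 0-100 priority score."""
    for name in weights:
        if not 0 <= ratings[name] <= 5:
            raise ValueError(f"{name} rating must be between 0 and 5")
    total = sum(weights[name] * ratings[name] for name in weights)
    return round(total / 5 * 100, 1)

# Hypothetical rumor: severe potential harm, limited reach so far, accelerating.
rumor = {"harm": 5, "reach": 2, "velocity": 4, "stickiness": 3}
print(priority_score(rumor))  # 75.0
```

A single number is only a triage aid. Anything that touches a red line may warrant escalation regardless of its score.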

Step 4: Prepare response playbooks by narrative type

Playbooks should include approved language, supporting links, on-record spokespeople, and escalation rules. For regulated industries, route through compliance automatically when red lines are triggered.

Step 5: Measure resolution, not just sentiment

Track whether the core claim declines in prevalence, whether corrected content is being cited, and whether search/AI summaries reflect the correction. Resolution metrics beat vanity metrics.

As for who owns this, the most effective setups use a cross-functional narrative risk council: comms, legal, customer support, security, product, and a senior decision-maker who can approve actions quickly.

Practical reputation management tactics to protect your narrative in search and AI answers

In 2025, reputation is shaped not only by what people post, but by what systems summarize. Protecting your brand story means strengthening authoritative sources and making them easy to retrieve, quote, and verify.

1) Publish “truth anchors” that are citation-friendly

- Policy pages (privacy, returns, warranties) written clearly, with version notes.

- Evidence pages for claims: test methods, certifications, lab partners, and limitations.

- Incident pages for known issues: what happened, scope, customer impact, and remediation steps.

These pages should be stable, specific, and updated promptly. AI systems and journalists prefer sources that are precise and consistent.

2) Strengthen your “about” and leadership footprint

Content aligned with E-E-A-T (experience, expertise, authoritativeness, trustworthiness) shows clear ownership: who you are, who is responsible for claims, and how to contact you. Add SME bios tied to relevant content, and ensure spokespeople have consistent profiles across channels.

3) Convert corrections into multiple formats

When a claim is false or misleading, publish a short, quotable correction plus a deeper explainer. Repurpose into:

- Customer support macros for consistent replies

- Short Q&A posts for social

- Media statement with verified facts

- FAQ updates that answer the exact phrasing people are repeating

4) Use targeted engagement instead of broad rebuttals

AI detection should tell you which communities and creators are driving the narrative. Engage where it matters: the thread that seeded the claim, the video that normalized it, or the forum where purchase decisions happen. Broad “we deny this” posts often amplify the rumor.

5) Prepare for “search first” and “AI answer first” customer behavior

Your team should monitor high-intent queries tied to the hijacked narrative, then publish content that answers them directly. If customers are asking “Is Brand X a scam?” a generic homepage update won’t help; a clear, evidence-based page that addresses the question will.

Reputation management also includes internal readiness. If your support team learns about the rumor before marketing does, you need a feedback channel that automatically routes emerging claims back into the detection and verification pipeline.

Choosing AI brand safety tools and proving they work

Tool selection fails when teams buy dashboards instead of outcomes. In 2025, evaluate AI brand safety systems based on how well they detect, explain, and operationalize narrative threats.

Key evaluation criteria

- Coverage: Which platforms, languages, and media types are included? Can it analyze video and audio transcripts?

- Explainability: Can the system show why a cluster is connected and which posts are central?

- First-seen and propagation mapping: Does it identify origin points and top amplifiers?

- Customization: Can you define sensitive narratives, products, executives, and competitor comparisons?

- Alert quality: Does it reduce noise with risk scoring and thresholds?

- Workflow integrations: Can it open tickets, notify Slack/Teams, and feed a case-management process?

- Security and governance: Data handling, access controls, audit logs, and retention policies.

How to run a proof of value

- Back-test against one or two real past incidents: did it detect early signals?

- Simulate a narrative drift scenario: can the team move from alert to verified response in hours, not days?

- Measure reduction in time-to-awareness, time-to-triage, and time-to-publish correction.
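The three timing metrics can be computed from four timestamps per incident. The sketch below assumes one possible set of definitions (awareness runs from first-seen to alert, triage from alert to triage decision, publish from triage to published correction); the function name and the sample timestamps are hypothetical.

```python
from datetime import datetime

def response_intervals(first_seen, alerted, triaged, published):
    """Return the duration of each response stage in hours."""
    def hours(start, end):
        return (end - start).total_seconds() / 3600

    return {
        "time_to_awareness_h": hours(first_seen, alerted),
        "time_to_triage_h": hours(alerted, triaged),
        "time_to_publish_h": hours(triaged, published),
    }

# Hypothetical incident timeline from a back-test.
intervals = response_intervals(
    first_seen=datetime(2025, 3, 1, 8, 0),
    alerted=datetime(2025, 3, 1, 9, 30),
    triaged=datetime(2025, 3, 1, 11, 0),
    published=datetime(2025, 3, 1, 15, 0),
)
print(intervals)
# {'time_to_awareness_h': 1.5, 'time_to_triage_h': 1.5, 'time_to_publish_h': 4.0}
```

Comparing these intervals for the same past incident with and without the tool gives a concrete measure of reduction for the proof of value.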

E-E-A-T note: AI should not be the final judge of truth. Your process should document human verification, cite primary sources, and keep a clear separation between detection, analysis, and official statements. That combination of transparent sourcing and accountable decision-making is what builds durable trust.

FAQs

What is narrative hijacking in marketing?

Narrative hijacking is when external actors reshape your brand story into a different, often damaging storyline by repeating altered claims, removing context, or manufacturing “evidence.” It goes beyond normal criticism because it is designed to redirect perception and decision-making at scale.

How does AI detect narrative hijacking?

AI detects hijacking by clustering semantically similar claims, tracking how narratives evolve, identifying unusual surges and coordinated amplification, and mapping who spreads the story across channels. It flags risk patterns early, then your team verifies facts and decides on action.

Is AI narrative monitoring the same as social listening?

No. Social listening focuses on mentions and sentiment. AI narrative monitoring focuses on the underlying claims, how they mutate, and which sources drive propagation, making it more useful for targeted corrections and crisis prevention.

What should a brand do first when a false story starts spreading?

Capture the exact claim and its variants, identify first-seen sources and top amplifiers, verify the facts internally, and publish a concise correction with supporting evidence. Then deploy consistent responses through support, social, and search-focused content where customers are asking questions.

How can we protect our brand story in AI-generated answers?

Maintain citation-friendly “truth anchor” pages, keep policies and claims specific and updated, publish direct Q&A content matching real query phrasing, and ensure clear authorship and accountability signals. Monitor high-intent queries tied to the narrative and update content as the story evolves.

Who should own narrative hijacking response inside a company?

A cross-functional team works best: communications leads messaging, legal manages risk, customer support reports real-time objections, product and security validate facts, and an empowered executive approves actions quickly. Clear escalation rules prevent delays.

AI-powered detection works best when it strengthens—not replaces—your accountability. Use AI to spot story drift early, verify claims with documented evidence, and publish clear corrections that customers and systems can cite. Build truth anchors, align teams around a repeatable workflow, and engage where narratives originate. Protecting your brand story in 2025 is a discipline: detect, verify, respond, and measure what changes.
