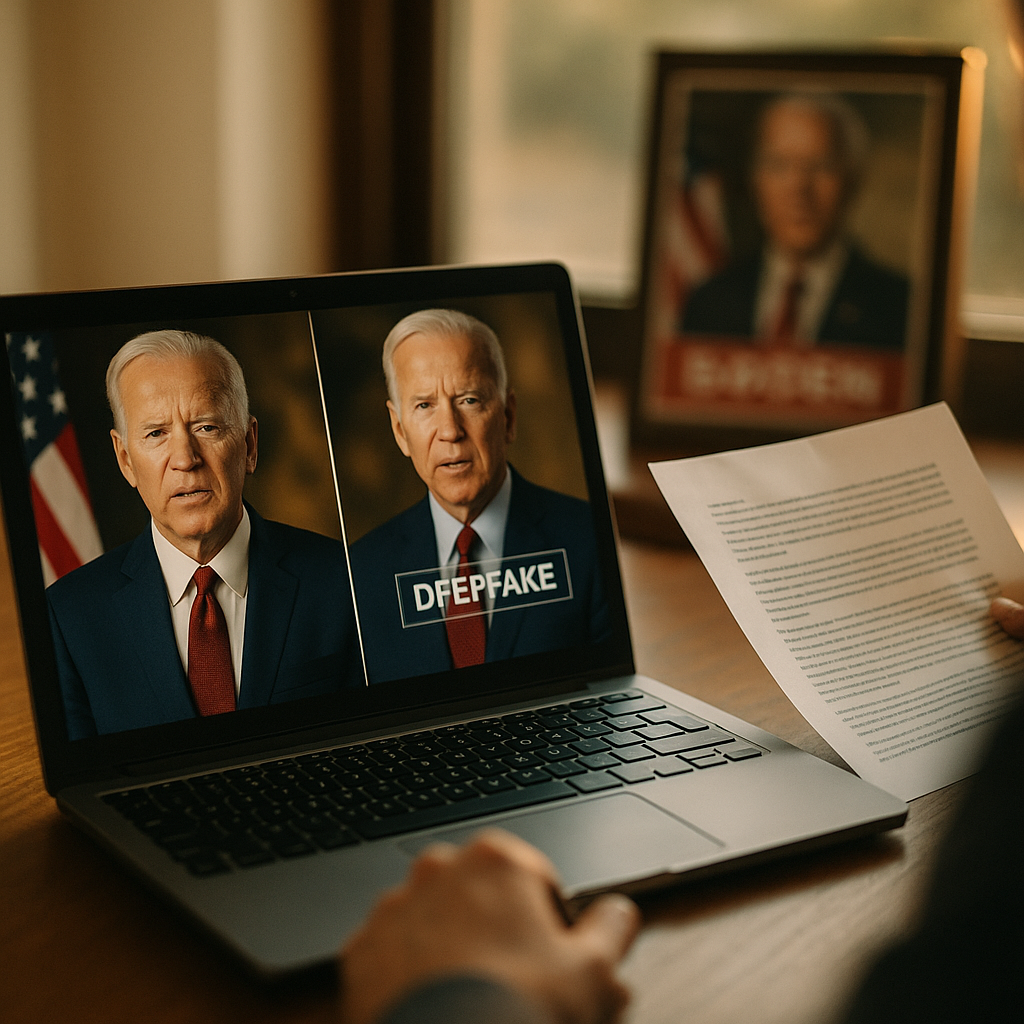

In 2025, political campaigns and advocacy groups face fast-changing expectations around deepfake disclosure as synthetic audio, video, and images become easy to produce and hard to spot. Regulators, platforms, and voters expect transparency, yet the rules must still leave room for legitimate satire and accessibility tools. This guide explains what compliance teams must do, where risk concentrates, and how to operationalize disclosure so your ads pass scrutiny before they go live.

Political deepfake ad compliance: what counts as “synthetic” and when disclosure is triggered

Compliance starts with a clear definition of what your organization will treat as “synthetic” for political and advocacy advertising. In practice, disclosure duties are most often triggered by materially deceptive manipulated media—content that would likely mislead a reasonable viewer about what a real person said or did, or about the authenticity of an event.

For internal policy, define a “deepfake” broadly as AI-generated or AI-altered media that depicts a real person’s likeness, voice, or actions in a way that appears authentic. Then specify what “material” means in ad review terms:

- Identity manipulation: face swaps, voice cloning, or avatar-based impersonation of a candidate, officeholder, election official, journalist, or community leader.

- Statement fabrication: synthetic speech or video that creates a false quote or endorsement.

- Context manipulation: editing that changes meaning (e.g., splicing to imply support/opposition), especially when paired with realistic generative enhancements.

- Event fabrication: AI-generated scenes of violence, ballot tampering, arrests, or “breaking news” that never happened.

Not every use of AI triggers the same obligations. Many compliance programs carve out low-risk uses where no reasonable viewer would be misled, such as:

- Purely stylistic generation (illustrations, generic scenery) with no real-person depiction and no fabricated event claims.

- Accessibility edits (captions, translation) that do not alter the substantive message.

- Clearly labeled satire/parody, though disclosure may still be required depending on jurisdiction and platform rules.

The practical takeaway: if the ad uses AI to create realism about a real person or real-world event, assume disclosure is required and document your decision. If you rely on an exception (satire, non-material use), memorialize the rationale and ensure the creative is unmistakably non-deceptive.
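
If your team tracks intake answers in a review tool, the rule of thumb above can be encoded as a simple triage step. The sketch below is a minimal Python illustration under stated assumptions: the `AssetFacts` fields and the outcome labels are hypothetical internal names, and the logic reflects this guide's internal-policy default, not the text of any statute or platform rule.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Optional


@dataclass
class AssetFacts:
    """Hypothetical intake answers for one creative asset (field names are illustrative)."""
    uses_ai: bool                        # any AI generation or alteration at all
    realistic_real_person: bool = False  # AI makes a real person appear to say or do something
    realistic_real_event: bool = False   # AI presents a real-world event as authentic footage
    changes_substance: bool = False      # the edit alters the substantive message
    exception: Optional[str] = None      # e.g. "satire"; the rationale must be memorialized


def triage(asset: AssetFacts) -> str:
    """Apply the internal rule of thumb; this encodes policy, not any specific law."""
    if not asset.uses_ai:
        return "no_synthetic_disclosure"
    material = (
        asset.realistic_real_person
        or asset.realistic_real_event
        or asset.changes_substance
    )
    if not material:
        # Stylistic generation, captions, translation: low risk, but still document the call.
        return "low_risk_document_rationale"
    if asset.exception:
        # Relying on satire or another exception: route to legal review with a written rationale.
        return "exception_review"
    return "disclose"
```

Usage: `triage(AssetFacts(uses_ai=True, realistic_real_person=True))` returns `"disclose"`. The sketch deliberately routes a claimed exception to review instead of clearing it automatically, because satire may still need a label depending on jurisdiction and platform.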

Election advertising disclosure requirements: the regulatory landscape and who must comply

In 2025, “deepfake disclosure” obligations can arise from multiple sources at once: election laws, consumer protection and unfair/deceptive practices standards, defamation and right-of-publicity doctrines, and platform policies. Campaigns often underestimate the crossover risk: an ad can be lawful under one regime yet still be rejected by a platform or expose the sponsor to civil claims.

Who is responsible? Treat compliance as shared liability among:

- Sponsors: candidates, committees, parties, PACs, advocacy organizations, and issue coalitions.

- Publishers and platforms: broadcasters, streaming services, social networks, and programmatic ad exchanges that impose their own disclosure and verification rules.

- Vendors: ad agencies, production studios, AI tool providers, and media buyers who may create or place synthetic assets.

How rules typically work: jurisdictions that regulate political deepfakes generally focus on (1) material deception and (2) timing near elections. Even where statutes differ, compliance patterns converge:

- Mandatory labeling for synthetic or manipulated content used to influence voters.

- Prohibitions on distributing deceptive synthetic media under certain conditions (for example, close to an election, or involving election administration).

- Required disclaimers identifying the sponsor and clarifying the nature of the manipulation.

Answering the question you will get from leadership: “Can we just follow platform policy?” Platform compliance is necessary but not sufficient. Platforms may allow content that a local law restricts, and they can also require disclosures beyond what law mandates. Build a unified “highest standard” playbook for the jurisdictions you target and the platforms you plan to use.

AI-generated political ad disclaimer: what it must say, where it must appear, and how to make it unmissable

An effective disclaimer has two jobs: (1) inform the viewer clearly and (2) survive review by regulators, platforms, and opponents. In 2025, the safest approach is plain-language disclosure that is unavoidable and proximate to the synthetic element.

What to say (model language): Use direct wording that an ordinary viewer understands. Examples that generally reduce risk:

- Video: “This video contains AI-generated or digitally altered content.”

- Voice: “Audio in this ad was generated using AI.”

- Depiction of a person: “This is a synthetic depiction; the person shown did not say or do this.”

- Satire (if applicable): “Parody: scenes and audio are digitally created.”

Where to place it:

- On-screen text during the portion containing the synthetic content, not only at the end card.

- Audio disclosure for radio, podcasts, and any ad where the misleading element is primarily auditory (e.g., a cloned voice).

- Ad description/metadata when platforms provide a disclosure field; treat this as additive, not a substitute for in-ad labeling.

How to format it: Compliance teams often fail on legibility and duration. Adopt internal minimums that exceed “barely visible” practices:

- Readable contrast (high-contrast text over a solid or blurred background strip).

- Sufficient duration so it can be read once at normal speed, without pausing.

- Plain language (avoid legal jargon like “digitally synthesized audiovisual representation” unless required).
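
“Sufficient duration” is easier to enforce in QA when it is a number. The sketch below converts a disclaimer's word count into a minimum on-screen time and keeps the label up from the start of the synthetic segment. The reading rate, notice time, and floor are assumptions chosen for illustration, not regulatory or platform standards; set your own values in internal policy.

```python
from __future__ import annotations

import math

# Assumed internal minimums. These are NOT regulatory or platform standards.
WORDS_PER_SECOND = 3.0  # assumed comfortable on-screen reading rate (about 180 words per minute)
NOTICE_SECONDS = 1.0    # assumed time for a viewer to notice the text before reading starts
FLOOR_SECONDS = 4.0     # assumed minimum on-screen time for any disclosure


def min_disclosure_seconds(text: str) -> float:
    """Minimum on-screen time to read the text once at normal speed, rounded up to 0.5 s."""
    words = len(text.split())
    needed = words / WORDS_PER_SECOND + NOTICE_SECONDS
    return max(FLOOR_SECONDS, math.ceil(needed * 2) / 2)


def disclosure_window(segment_start: float, segment_end: float, text: str) -> tuple[float, float]:
    """Show the label from the start of the synthetic segment until the later of
    the segment's end or the minimum reading time."""
    return segment_start, max(segment_end, segment_start + min_disclosure_seconds(text))
```

With these assumed values, the 14-word person disclaimer (“This is a synthetic depiction; ...”) needs 6.0 seconds, and the 8-word video disclaimer sits at the 4-second floor. If the synthetic segment is shorter than that, the label stays on screen past the segment instead of being cut off.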

Common pitfall: Disclosing only that “images were dramatized” when the ad uses a candidate’s synthetic voice or face. Match the disclosure to the actual manipulation. Reviewers look for specificity.
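
One way to enforce specificity is to generate disclaimers from the manipulations recorded at intake rather than typing them by hand. This sketch reuses the model language above; the keys (`person`, `voice`, `video`, `parody`) are hypothetical internal labels, and the function fails closed when a manipulation has no approved wording.

```python
from __future__ import annotations

# Model language quoted from this guide; the keys are hypothetical internal labels.
MODEL_LANGUAGE = {
    "person": "This is a synthetic depiction; the person shown did not say or do this.",
    "voice": "Audio in this ad was generated using AI.",
    "video": "This video contains AI-generated or digitally altered content.",
    "parody": "Parody: scenes and audio are digitally created.",
}


def build_disclosures(manipulations: set[str]) -> list[str]:
    """Return one approved line per manipulation actually used."""
    unknown = manipulations - set(MODEL_LANGUAGE)
    if unknown:
        # Fail closed: a new manipulation type needs reviewed language, not a generic label.
        raise ValueError(f"no approved disclosure for: {sorted(unknown)}")
    # Dict order (person, voice, video, parody) puts the most specific statements first.
    return [line for key, line in MODEL_LANGUAGE.items() if key in manipulations]
```

For an ad that uses a cloned candidate voice, `build_disclosures({"person", "voice"})` returns the person and voice lines, so the label names what was actually manipulated rather than offering a vague “dramatized” notice.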

Deepfake political advertising penalties: enforcement, takedowns, and reputational risk

Teams often focus narrowly on fines, but the largest operational risk is usually disruption: takedowns and public corrections can derail message timing, fundraising, and early-vote targeting. In 2025, enforcement pressure comes from multiple directions:

- Regulators: civil penalties, injunctions, and expedited processes in election-sensitive windows.

- Platforms: ad rejection, account restrictions, mandatory identity verification, and transparency library annotations.

- Opponents and watchdogs: rapid-response complaints, public attribution, and litigation threats.

- Media and fact-checkers: reputational harm that persists even after an ad is pulled.

What triggers escalated scrutiny:

- Impersonating a candidate or official in a way that appears to concede, endorse, threaten, or admit wrongdoing.

- Claims about voting procedures paired with synthetic “official” voice/video.

- Fabricated crises (violence, ballot destruction, arrests) presented as real footage.

- High-spend distribution with microtargeting, especially if the content is difficult to discover publicly.

How to mitigate penalties and fallout: Build a documented compliance record that shows intent to inform, not deceive. Keep:

- Source files and edit logs for each asset.

- Tooling documentation (what AI system was used, what prompts/settings, and who operated it).

- Legal and platform review notes showing why the disclosure is adequate.

- Distribution records (platforms, dates, targeting parameters) to support fast remediation if needed.

This documentation supports faster appeals, better outcomes in platform disputes, and a credible narrative if a regulator or journalist asks questions.
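
Keeping these records in a consistent structure makes gaps visible before anyone asks for them. The Python sketch below is one possible shape, assuming a hypothetical `AssetRecord` with one field per item in the list; prompts and settings are not checked because not every tool exposes them.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class AssetRecord:
    """Hypothetical per-asset compliance record mirroring the list above."""
    asset_id: str
    source_files: list[str] = field(default_factory=list)
    edit_log: list[str] = field(default_factory=list)
    ai_tool: str = ""           # which AI system was used
    prompts_settings: str = ""  # prompts/settings, kept when available
    operator: str = ""          # who operated the tool
    review_notes: list[str] = field(default_factory=list)   # legal and platform review
    distribution: list[dict] = field(default_factory=list)  # platforms, dates, targeting


def missing_items(rec: AssetRecord) -> list[str]:
    """List the record categories that are still empty."""
    checks = {
        "source_files": bool(rec.source_files),
        "edit_log": bool(rec.edit_log),
        "tooling": bool(rec.ai_tool and rec.operator),
        "review_notes": bool(rec.review_notes),
        "distribution": bool(rec.distribution),
    }
    return [name for name, ok in checks.items() if not ok]
```

Distribution records naturally fill in after launch, so a pre-launch check might ignore that category and a post-launch audit would not.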

Synthetic media transparency policy: building a repeatable compliance workflow for campaigns and advocacy groups

Disclosure compliance is not a last-minute text overlay; it is a workflow. The strongest programs treat synthetic media as a controlled asset class with review gates from concept to post-launch monitoring.

1) Intake and classification

- Require creators and vendors to answer: “Did you use AI to generate or alter any audio, video, or images?”

- Classify the asset: real-person depiction, voice, event footage, illustrative/stylized.

2) Risk assessment tied to purpose

- Is the ad political (candidate/election) or advocacy (issue-based)? Both can trigger legal requirements and platform policies.

- Could a reasonable viewer think the depicted person actually spoke/acted that way?

- Is the content likely to be shared out of context?

3) Disclosure design and QA

- Create pre-approved disclaimer templates for common formats (15s, 30s, 6s bumpers, radio).

- Test visibility on mobile, muted playback, and vertical placements.

- Ensure disclosures remain intact after platform compression or cropping.

4) Platform readiness

- Use platform disclosure tools (where available) and keep screenshots of submission fields.

- Confirm ad category settings (political/issue) and identity verification status before launch.

5) Post-launch monitoring and rapid response

- Monitor comments and reports for “deepfake” allegations; treat them as early warnings.

- Maintain a kill-switch plan: who can pause spend, who files appeals, who publishes clarifications.
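
The five steps can be treated as explicit gates. This sketch, with hypothetical gate and role names, blocks launch until steps 1 through 4 are signed off and every kill-switch role from step 5 has a named owner. It is one way to encode the workflow, not a prescribed system.

```python
from __future__ import annotations

# Pre-launch gates named after workflow steps 1-4; kill-switch roles come from step 5.
PRE_LAUNCH_GATES = (
    "intake_and_classification",
    "risk_assessment",
    "disclosure_qa",
    "platform_readiness",
)
KILL_SWITCH_ROLES = ("pause_spend", "file_appeals", "publish_clarifications")


def launch_blockers(gates: dict[str, bool], owners: dict[str, str]) -> list[str]:
    """Return everything that should block launch, in workflow order."""
    blockers = [g for g in PRE_LAUNCH_GATES if not gates.get(g, False)]
    # Rapid response only works post-launch if every role has a named owner now.
    blockers += [f"no owner for {role}" for role in KILL_SWITCH_ROLES if not owners.get(role)]
    return blockers
```

An empty list means the asset is cleared to launch; anything else names the gate or role to resolve first.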

Vendor controls you should require: Contract clauses obligating vendors to disclose AI use, preserve project files, follow your labeling standards, and indemnify for undisclosed synthetic manipulation. Also require vendors to avoid training models on your campaign data unless explicitly authorized.

Platform rules for deepfake political ads: reconciling ad policies, verification, and transparency libraries

Even if your disclosure complies with applicable law, platforms can impose stricter standards. In 2025, major platforms and ad networks commonly require some combination of identity verification, political/issue ad authorization, and special labeling for manipulated media.

What to expect from platforms:

- Identity verification and authorization: delays are common; start weeks ahead of major flights.

- Disclosure fields: some platforms ask whether content is digitally altered or AI-generated; answer truthfully and keep internal records.

- Automated detection and human review: synthetic assets can trigger false positives; be ready with documentation and a consistent explanation.

- Public transparency libraries: your ad and sponsor information may be visible; mismatches between your in-ad disclaimer and library notes can raise suspicion.

Operational advice: Standardize disclosures across placements so viewers see consistent messaging whether the ad runs on TV, streaming, social, or programmatic. If a platform’s character limits constrain what you can say in metadata, prioritize in-ad disclosure and link to a landing page that explains your use of synthetic media in plain language.
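
Mismatches across placements are straightforward to catch before submission. This sketch assumes a hypothetical record per placement holding the in-ad disclosure text and the answer given in the platform's AI or altered-content field (`None` where the platform has no such field). For one creative, it flags differing wording, missing in-ad labels, and field answers that contradict the creative.

```python
from __future__ import annotations

from typing import Optional


def _norm(text: str) -> str:
    """Case- and whitespace-insensitive form used to compare disclosure wording."""
    return " ".join(text.lower().split())


def placement_mismatches(placements: dict[str, tuple[str, Optional[bool]]]) -> list[str]:
    """Each value is (in_ad_text, platform_ai_answer) for one placement of the same creative."""
    problems = []
    texts = {_norm(t) for t, _ in placements.values() if t.strip()}
    if len(texts) > 1:
        problems.append("in-ad disclosure wording differs across placements")
    for name, (text, ai_answer) in placements.items():
        has_label = bool(text.strip())
        if not has_label:
            problems.append(f"{name}: missing in-ad disclosure")
        if ai_answer is not None and ai_answer != has_label:
            problems.append(f"{name}: platform field answer does not match in-ad disclosure")
    return problems
```

Running this check against the same creative's placements before submission helps keep transparency-library entries consistent with what viewers actually see.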

Answering a common follow-up: “What about influencer content?” If creators post paid political or issue advocacy content, treat them like a distribution channel. Provide them with required disclosure language, require pre-approval, and ensure they use both platform-native paid partnership tools and your synthetic media disclaimers when AI is involved.

FAQs

Do we need a disclosure if we only used AI to clean up audio or remove background noise?

Usually not, if the edit does not materially change what was said or imply a different speaker. Document the edit type and confirm it does not create a misleading impression about identity or meaning.

Does satire or parody still require a deepfake disclosure?

Often yes. Even when parody is protected, platforms and some jurisdictions may still require clear labeling to prevent confusion. If you rely on parody, make it unmistakable and add a direct “parody/synthetic” notice.

What if we use an AI-generated actor who is not a real person?

Risk is lower, but disclosure may still be required by platform policy or if the ad implies real footage of real events. If the synthetic actor could be mistaken for a real candidate or official, treat it as high risk and disclose.

How should we disclose a cloned voice in a radio ad?

Use an audio disclaimer spoken clearly near the beginning or immediately before/after the cloned segment, such as “Audio in this message was generated using AI.” If the voice imitates a real person, add that it is a synthetic depiction.

Can we place the disclosure only in small print at the end?

That approach commonly fails platform review and increases legal risk. Place the disclosure during the manipulated segment, ensure it is readable on mobile, and add an audio disclosure when the manipulation is primarily auditory.

What records should we keep to defend our compliance?

Keep source files, edit timelines, tool and prompt logs (when available), vendor attestations, internal review approvals, screenshots of platform submission answers, and distribution logs. These enable fast responses to takedowns, appeals, and inquiries.

Disclosure compliance for deepfake and synthetic political content is now a baseline expectation in 2025, not an optional safeguard. Build definitions that capture real-person and event deception, label synthetic elements where viewers encounter them, and align with both law and platform policy. The winning strategy is operational: standardized templates, documented reviews, and rapid monitoring that prevent surprises when timing matters most.