Reviewing digital twin platforms for predictive product design audits has become a strategic priority for engineering, quality, and operations leaders in 2026. As products grow more connected, regulated, and software-defined, teams need better ways to detect design risk before launch. The right platform can reveal hidden failure patterns, shorten validation cycles, and sharpen decisions across the product lifecycle. What should you look for?

Why digital twin software matters for predictive product design audits

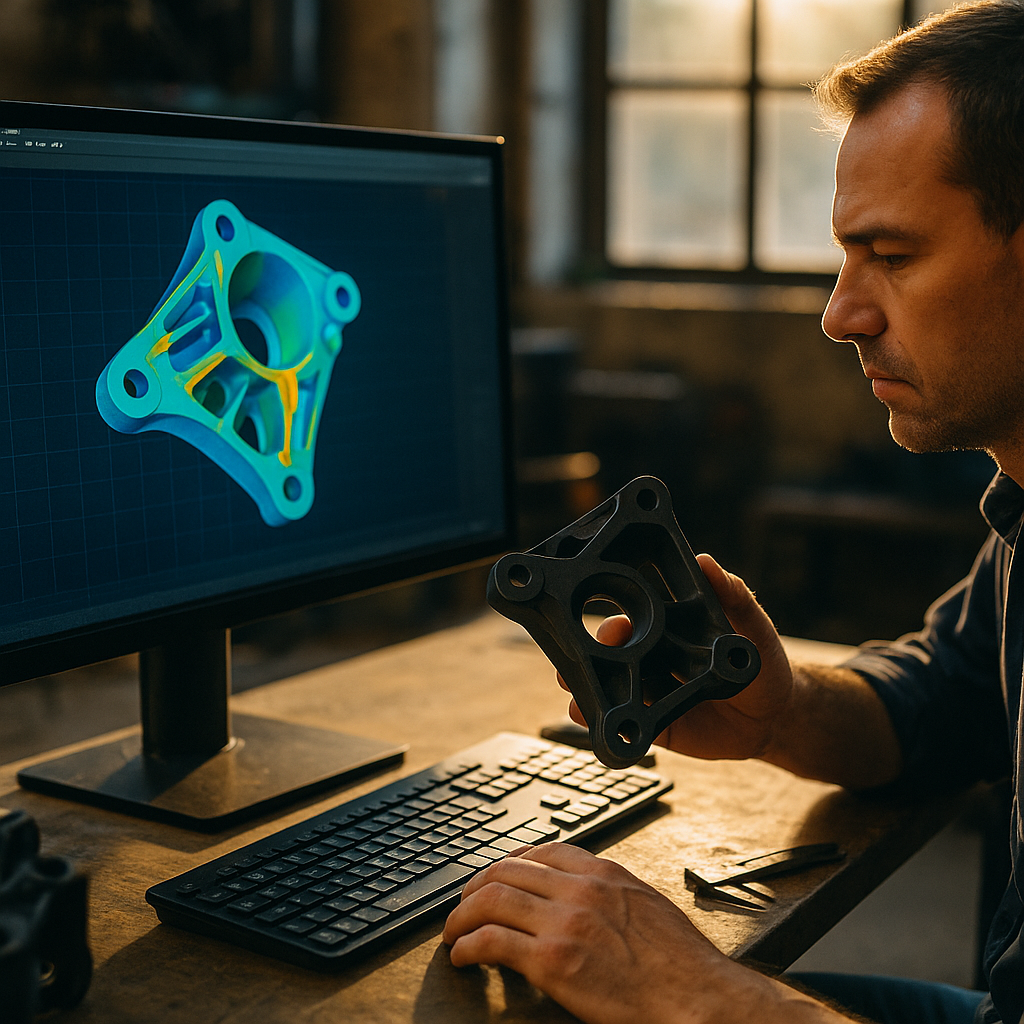

Digital twin software creates a dynamic virtual representation of a product, subsystem, or production environment using engineering models, sensor data, test results, and operational feedback. In a predictive product design audit, that virtual model helps teams evaluate whether a design is likely to meet performance, safety, reliability, compliance, and serviceability targets before issues appear in the field.

This matters because conventional design reviews often depend on isolated spreadsheets, late-stage physical testing, and assumptions that age quickly. A strong digital twin platform closes those gaps. It links CAD, CAE, PLM, IoT, and field data so engineers can simulate realistic conditions, compare expected versus actual behavior, and identify failure modes earlier.

From an E-E-A-T standpoint, buyers should favor platforms that demonstrate:

- Experience: Proven use in real product environments, not only in lab demos.

- Expertise: Deep support for engineering simulation, systems modeling, and lifecycle traceability.

- Authoritativeness: Adoption in regulated and quality-sensitive industries such as automotive, medtech, aerospace, electronics, and industrial equipment.

- Trustworthiness: Transparent security, data governance, model versioning, and audit trails.

If your goal is predictive design auditing, do not judge platforms on visualization alone. Impressive 3D views are useful, but they are not enough. The platform must support traceable decisions: why a design passed, where risk remains, what assumptions drove the model, and which changes improve outcomes fastest.

Core platform evaluation criteria in a product design audit platform

When reviewing any product design audit platform, focus on how well it supports risk prediction and decision quality across the full design lifecycle. The best evaluations use a weighted scorecard built around business-critical outcomes, not marketing claims.

Start with model fidelity. Can the platform represent physics, material behavior, tolerances, embedded software interactions, and environmental stress accurately enough for your use case? A consumer wearable, electric drivetrain, infusion pump, and packaging robot each require different levels of model complexity. High fidelity without practical usability is costly, but low fidelity can create false confidence.

Next, assess data integration. A predictive audit depends on more than simulation. The platform should ingest and reconcile data from:

- CAD and CAE tools

- PLM and requirements systems

- Manufacturing execution and quality systems

- IoT and telemetry streams

- Test benches and validation labs

- Service, warranty, and field failure databases

Then examine traceability. Audits require evidence. You should be able to connect a requirement to a model, a simulation output to a risk register, and a design change to a predicted improvement. If the platform cannot maintain that chain clearly, it will struggle in regulated environments and executive reviews alike.
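One way to picture that evidence chain is as a set of linked records. The sketch below is illustrative only: the record types, field names, and IDs are hypothetical and do not reflect any vendor's data model. It flags requirements that cannot be traced through a model to a simulation result and on to a risk-register entry.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Requirement:
    req_id: str
    text: str
    model_ids: list[str] = field(default_factory=list)  # twin models covering it


@dataclass
class SimulationResult:
    result_id: str
    model_id: str
    risk_ids: list[str] = field(default_factory=list)  # risk entries it informs


def untraced_requirements(requirements, results):
    """Return IDs of requirements lacking a model -> simulation -> risk chain."""
    # Models with at least one simulation result linked to a risk entry.
    evidenced_models = {r.model_id for r in results if r.risk_ids}
    return [
        req.req_id
        for req in requirements
        if not any(m in evidenced_models for m in req.model_ids)
    ]


# Hypothetical example data.
requirements = [
    Requirement("REQ-001", "Housing survives a 1.5 m drop", ["MODEL-DROP"]),
    Requirement("REQ-002", "Battery stays below 45 C", ["MODEL-THERMAL"]),
    Requirement("REQ-003", "Seal rated IP67", []),
]
results = [
    SimulationResult("SIM-10", "MODEL-DROP", ["RISK-7"]),
    SimulationResult("SIM-11", "MODEL-THERMAL", []),  # no risk entry linked yet
]

gaps = untraced_requirements(requirements, results)
print(gaps)
```

In this made-up data, the second requirement has a simulation but no linked risk entry, and the third has no model at all, so both surface as gaps an auditor would want closed.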

Scenario testing is another critical capability. A useful platform lets teams stress a design under expected and edge-case conditions: thermal loads, vibration, battery degradation, user misuse, component substitutions, and supply-chain-driven variation. That supports more realistic audits than pass/fail reviews based only on nominal assumptions.

Finally, evaluate usability for cross-functional teams. Predictive product design audits are rarely owned by engineering alone. Quality leaders, compliance teams, operations managers, product owners, and service organizations all need access to the right level of insight. The strongest platforms provide role-based dashboards, explainable results, and collaboration features without forcing every stakeholder into specialist workflows.
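The weighted scorecard mentioned at the start of this section can be as simple as the sketch below. The weights, the 1 to 5 scoring scale, and the two vendors are assumptions chosen for illustration; your own weights should reflect the outcomes that matter most to your business.

```python
# Hypothetical weights for the five criteria above; they must sum to 1.0.
WEIGHTS = {
    "model_fidelity": 0.30,
    "data_integration": 0.25,
    "traceability": 0.20,
    "scenario_testing": 0.15,
    "usability": 0.10,
}


def weighted_score(scores, weights=WEIGHTS):
    """Combine per-criterion scores (assumed 1-5 scale) into one weighted score."""
    if abs(sum(weights.values()) - 1.0) > 1e-9:
        raise ValueError("weights must sum to 1.0")
    missing = set(weights) - set(scores)
    if missing:
        raise ValueError(f"missing scores for: {sorted(missing)}")
    return sum(weights[c] * scores[c] for c in weights)


# Illustrative scores for two fictional vendors.
vendor_a = {"model_fidelity": 5, "data_integration": 3, "traceability": 4,
            "scenario_testing": 4, "usability": 2}
vendor_b = {"model_fidelity": 3, "data_integration": 4, "traceability": 5,
            "scenario_testing": 4, "usability": 5}

for name, scores in [("Vendor A", vendor_a), ("Vendor B", vendor_b)]:
    print(f"{name}: {weighted_score(scores):.2f}")
```

Agreeing on the weights before any vendor demo helps keep the evaluation anchored to business outcomes rather than to whichever feature was presented most persuasively.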

Key predictive maintenance analytics capabilities that improve audit accuracy

Although predictive maintenance analytics is often discussed in operations, it also plays a major role in product design audits. Why? Because service and reliability data reveal how products actually age, fail, and behave in uncontrolled environments. When those patterns flow back into the twin, audits become far more predictive.

Look for platforms that combine simulation with condition-based and historical performance analytics. This helps teams move beyond static design validation and toward a continuously learning audit model. For example, if field sensors show repeated stress spikes under usage patterns not captured in original testing, the twin can expose a design margin problem early in the next revision cycle.

Important analytics capabilities include:

- Anomaly detection: Identifies deviations from expected behavior that may signal emerging design weaknesses.

- Failure mode prediction: Uses field and test data to estimate where and when failures are likely.

- Remaining useful life modeling: Helps teams understand durability under variable real-world conditions.

- Root cause correlation: Connects material choices, geometry, software logic, environmental factors, and manufacturing variability.

- Closed-loop learning: Feeds service and warranty insights back into design controls and validation plans.
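To make the first capability concrete, here is a minimal sketch of anomaly detection using a z-score against a validation baseline. It is a deliberately simple stand-in for whatever method a given platform actually uses, and the readings, units, and threshold are hypothetical.

```python
from statistics import mean, stdev


def flag_anomalies(baseline, field_readings, threshold=3.0):
    """Return (index, value) pairs whose z-score vs. the baseline exceeds threshold."""
    mu = mean(baseline)
    sigma = stdev(baseline)  # sample standard deviation of the baseline
    if sigma == 0:
        raise ValueError("baseline has no variation; z-scores are undefined")
    return [
        (i, x)
        for i, x in enumerate(field_readings)
        if abs(x - mu) / sigma > threshold
    ]


# Hypothetical strain readings (arbitrary units).
baseline = [100, 102, 98, 101, 99, 100, 103, 97]   # from validation testing
field_readings = [101, 99, 118, 100, 96, 125]      # from connected products

anomalies = flag_anomalies(baseline, field_readings)
print(anomalies)
```

Readings flagged this way are candidates for investigation, not proof of a design flaw. Their value in an audit comes from linking them back to the usage conditions and design margins the original tests assumed.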

The best platforms also make these analytics understandable. Black-box forecasts are hard to trust during an audit. Teams need explainability: which variables mattered most, how model confidence was measured, and where the data is thin or biased. This is especially important when audit findings may delay launch, trigger redesign, or influence supplier decisions.

A practical warning: predictive accuracy depends on data quality. If your telemetry is sparse, your service coding is inconsistent, or your test data is fragmented across business units, even a powerful platform will struggle. In those cases, platform selection should include a realistic plan for data cleanup, governance, and staged rollout.

How simulation-driven design tools support compliance and quality assurance

Simulation-driven design tools are central to modern predictive audits because they let teams evaluate performance before expensive physical builds and before quality issues become customer issues. But for audits, the value goes further than speed. These tools create repeatable, evidence-based workflows that support compliance, design control, and internal quality assurance.

In regulated or safety-critical sectors, reviewers should ask whether the platform supports validation of the digital twin itself. A model is only useful if its assumptions, calibration, and intended use are documented. Strong platforms make this manageable through version control, model governance, approval workflows, and clear records of who changed what and why.
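As a rough illustration of what such governance records might capture, the sketch below checks whether a model version is usable as audit evidence. The fields, the 365-day recalibration window, and the example values are all assumptions, not requirements drawn from any standard or product.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass(frozen=True)
class ModelVersion:
    model_id: str
    version: str
    intended_use: str
    calibrated_on: date
    approved_by: Optional[str]  # None until an approver signs off
    change_reason: str          # why this version was created


def audit_blockers(mv, use, today, max_age_days=365):
    """List the reasons a model version should not be used as audit evidence."""
    problems = []
    if mv.approved_by is None:
        problems.append("not approved")
    if mv.intended_use != use:
        problems.append("outside intended use")
    if (today - mv.calibrated_on).days > max_age_days:
        problems.append("calibration stale")
    return problems


# Hypothetical record for a thermal model.
mv = ModelVersion(
    model_id="MODEL-THERMAL",
    version="2.1",
    intended_use="thermal stress screening",
    calibrated_on=date(2025, 1, 10),
    approved_by=None,
    change_reason="updated material parameters",
)

blockers = audit_blockers(mv, "thermal stress screening", today=date(2026, 3, 1))
print(blockers)
```

An empty list would mean the version passes these three checks. A real governance workflow would likely add more, such as the validation evidence itself and the reviewer's scope of approval.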

Quality and compliance teams should review these capabilities closely:

- Verification and validation workflows for models and simulation outputs

- Change management linked to design revisions, test outcomes, and nonconformance findings

- Requirements mapping from design intent to test evidence and audit conclusions

- Electronic records and audit trails that support internal and external review

- Risk-based reporting for FMEA, hazard analysis, and corrective action planning

Another differentiator is how well the platform handles hybrid evidence. In real audits, no team relies on simulation alone. Decision-makers combine virtual tests, bench results, manufacturing capability data, supplier information, and field observations. The most useful digital twin environments bring that evidence together in one governed context rather than leaving teams to reconcile it manually.

This is where buyer discipline matters. A platform may excel at high-end simulation but offer weak quality workflows. Another may shine in documentation yet lack real predictive depth. The right choice depends on whether your top priority is concept-stage optimization, compliance-heavy design control, fleet-informed reliability improvement, or enterprise-wide lifecycle visibility.

Comparing industrial IoT platforms and engineering ecosystems

Many buyers evaluate digital twins through the lens of industrial IoT platforms, and that makes sense. IoT connectivity provides the operational data that keeps a twin current. Still, predictive product design audits require more than device dashboards and telemetry pipelines. The strongest solutions bridge operational technology and engineering systems instead of treating them as separate worlds.

When comparing vendors, ask whether the platform is primarily:

- IoT-first: Strong in device connectivity, streaming data, fleet visibility, and alerting

- Engineering-first: Strong in multiphysics simulation, design analysis, and model-based systems engineering

- Lifecycle-first: Strong in PLM, requirements traceability, and enterprise governance

- Composable: Built to integrate best-of-breed systems through APIs and data layers

None of these approaches is automatically superior. The right fit depends on your current maturity. If your organization already has robust engineering tools but weak product usage feedback, an IoT-heavy platform may unlock more audit value. If you collect abundant field data but cannot connect it to design decisions, an engineering-centered twin may be the smarter investment.

Integration architecture deserves close attention. Ask vendors to demonstrate real workflows, not slideware. For example:

- A field issue is detected in connected products.

- The signal is classified and linked to a subsystem.

- The twin updates with operational context.

- Engineers simulate alternative design responses.

- Quality reviews the predicted risk reduction.

- A change order is created with traceable evidence.

If the vendor cannot show that flow clearly, predictive auditing may remain fragmented after deployment. Also review scalability, data residency options, cybersecurity controls, identity management, and model execution costs. In 2026, cloud flexibility is expected, but many sectors still need hybrid or edge-aware architectures for performance, privacy, and compliance reasons.
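The six-step workflow above can be pictured as a small state machine in which every transition must carry evidence. This is a sketch under stated assumptions: the stage names mirror the list, while the class, issue ID, and notes are hypothetical.

```python
from enum import Enum


class Stage(Enum):
    DETECTED = 1
    CLASSIFIED = 2
    TWIN_UPDATED = 3
    SIMULATED = 4
    QUALITY_REVIEWED = 5
    CHANGE_ORDER = 6


class FieldIssue:
    """Tracks one field issue through the workflow, keeping an evidence trail."""

    def __init__(self, issue_id):
        self.issue_id = issue_id
        self.stage = Stage.DETECTED
        self.evidence = [(Stage.DETECTED, "issue detected in connected products")]

    def advance(self, note):
        """Move to the next stage, recording the evidence that justifies it."""
        if self.stage is Stage.CHANGE_ORDER:
            raise ValueError("workflow already complete")
        if not note:
            raise ValueError("each stage transition needs evidence")
        self.stage = Stage(self.stage.value + 1)
        self.evidence.append((self.stage, note))


issue = FieldIssue("FI-042")
for note in [
    "signal linked to cooling subsystem",
    "twin updated with ambient temperature profile",
    "two alternative designs simulated",
    "quality accepted predicted risk reduction",
    "change order raised with linked evidence",
]:
    issue.advance(note)

print(issue.stage.name, len(issue.evidence))
```

When watching a vendor demo, the useful question is whether the platform keeps an equivalent trail automatically or whether teams would have to assemble it by hand.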

Best practices for digital transformation in manufacturing with digital twins

Digital transformation in manufacturing often fails when companies treat digital twins as a software purchase rather than an operating model change. For predictive product design audits, success depends on governance, cross-functional ownership, and a phased implementation plan tied to measurable outcomes.

Begin with a narrow, high-value use case. Good candidates include recurring warranty failures, delayed validation cycles, high-cost design rework, supplier-related performance variability, or audit-heavy product lines. A focused pilot helps you validate data readiness, model assumptions, team workflows, and ROI before scaling.

Follow these best practices:

- Define the audit objective first. Be specific: reduce design escapes, improve reliability prediction, shorten compliance review time, or cut prototype iterations.

- Choose measurable KPIs. Track metrics such as defect escape rate, time to root cause, test-to-launch cycle time, warranty claim frequency, and redesign cost.

- Create a model governance framework. Assign ownership for validation, recalibration, approvals, and retirement of outdated models.

- Connect service data back to engineering. This closed loop is what makes audits genuinely predictive.

- Train non-specialists. Quality, product, and executive stakeholders need to interpret outputs correctly.

- Plan for interoperability. Avoid locking critical evidence in one vendor silo if your environment is multi-system by design.
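KPIs only help if everyone computes them the same way. The sketch below uses one common definition of defect escape rate (defects found after launch divided by all defects found); that definition and the pilot numbers are assumptions for illustration, so substitute your own.

```python
def defect_escape_rate(found_before_launch, found_after_launch):
    """Share of all known defects that were only found after launch."""
    total = found_before_launch + found_after_launch
    if total == 0:
        return 0.0
    return found_after_launch / total


def percent_change(baseline, current):
    """Relative change from baseline, in percent (negative means a reduction)."""
    if baseline == 0:
        raise ValueError("baseline must be non-zero")
    return (current - baseline) / baseline * 100


# Hypothetical numbers: the product line before the pilot vs. during it.
before = defect_escape_rate(found_before_launch=40, found_after_launch=10)
during = defect_escape_rate(found_before_launch=46, found_after_launch=4)

print(f"escape rate before pilot: {before:.0%}")
print(f"escape rate during pilot: {during:.0%}")
print(f"change: {percent_change(before, during):.0f}%")
```

Recording the baseline before the pilot starts matters as much as the formula, since an improvement claim is only credible against a figure that was fixed in advance.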

Organizations should also ask a practical question: who will maintain the twin after implementation? Many initiatives lose value because they launch as innovation projects without long-term process ownership. Sustainable programs usually involve a shared model across engineering, quality, manufacturing, and service teams, backed by clear data stewardship.

Vendor review should include customer references with similar complexity, product classes, and compliance demands. Ask those references what happened after year one: Did the platform improve decision speed? Did audit findings become more actionable? Were integrations stable? Was model maintenance manageable? These answers are often more revealing than feature lists.

Ultimately, the best digital twin platform is the one that improves product decisions before defects become expensive realities. It should help your teams see around corners, not simply document what already happened.

FAQs about digital twin platforms for predictive product design audits

What is a predictive product design audit?

A predictive product design audit evaluates likely performance, reliability, safety, and compliance outcomes before or during development by combining simulation, engineering data, and real-world feedback. Instead of reviewing designs only after failures or late testing, teams use a digital twin to identify probable risks earlier.

How is a digital twin different from a standard simulation model?

A standard simulation model is often static and used for a specific analysis. A digital twin is typically broader and continuously informed by design data, operational inputs, test results, and lifecycle changes. That makes it more useful for ongoing audits, monitoring, and design improvement.

Which industries benefit most from these platforms?

Industries with complex products, strict quality requirements, or connected devices benefit most. Common examples include automotive, aerospace, industrial equipment, electronics, energy systems, medtech, and advanced manufacturing.

What features are essential in platform reviews?

Look for model fidelity, strong integrations, traceability, scenario testing, predictive analytics, security controls, governance workflows, and role-based reporting. If you operate in a regulated setting, audit trails and model validation support are essential.

Can small or mid-sized manufacturers use digital twin platforms effectively?

Yes, if they start with a focused use case and select a platform that matches their data maturity. Smaller teams often succeed by targeting one costly design or quality problem first rather than attempting an enterprise-wide rollout immediately.

How do digital twins improve compliance readiness?

They centralize evidence, document assumptions, track changes, and link requirements to test and simulation outputs. This creates a clearer record for internal quality reviews and external audits while reducing manual reconciliation work.

What are the main implementation risks?

The biggest risks are poor data quality, weak governance, unclear ownership, overcomplicated pilots, and buying a platform that excels in one area but does not fit your actual audit workflow. A disciplined pilot with clear KPIs reduces these risks significantly.

How long does it take to see value?

Organizations often see early value once a pilot connects one high-priority product issue to usable simulation and field feedback. Broader strategic value takes longer because integrations, governance, and process adoption must mature. The timeline depends on data readiness and organizational complexity.

Reviewing digital twin platforms for predictive product design audits requires more than a feature checklist. The right platform connects engineering models, operational data, quality evidence, and governance into one trustworthy decision system. Prioritize traceability, predictive accuracy, and usable workflows over flashy visuals. In 2026, the winners are organizations that turn design audits from reactive reviews into continuous, evidence-led risk prevention.
